I have seen some improvement by taking a macOS screenshot first (Command-Shift-4, then click the little screenshot thumbnail in the corner of the screen so it is enlarged mid-screen, then use PicaText on that). It is funny, because doing that shouldn't add any data, but it sometimes picks up text that is missed when grabbing directly off the screen. ML definitely can be done on a laptop (heck, phones do ML pretty well); the downside is that far less data is fed into it than Google gets. Still, by incorporating a locally run ML mechanism, PicaText could improve for any one user over time (and perhaps offer an opt-in to upload the corpus of learned patterns, to be shared with everyone in the next version update).

That said, ML is really most practical on a cloud system, where it learns from everyone in the world instead of just the corrections *I* make, which is essentially why Google OCR is so darned good.

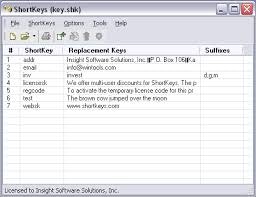

Places where it will often fail on standard screen fonts are 'm' versus 'rn' distinctions (when I clip the Participants list in a Zoom window, my name often comes out as "Torn" instead of "Tom"), added or dropped '.' characters and spaces, and smaller text. These days, to improve recognition in those situations, I'd use a little bit of ML and user correction feedback (i.e., the app scans "Torn" and I correct it to "Tom", then the pattern it scanned as "rn" gets recorded as a "less likely rn, more likely m" lesson, and over time it improves on the fonts people actually use).
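
To make the correction-feedback idea a bit more concrete, here is a minimal Python sketch of how such "lessons" could be recorded and reused. To be clear, this is not how PicaText works internally; the `CorrectionMemory` class, its `learn` and `suggest` methods, and the `min_count` threshold are all hypothetical names made up for illustration. It simply counts which scanned fragments the user keeps replacing, and offers the replacement once it has been seen often enough.

```python
from collections import Counter
from difflib import SequenceMatcher


class CorrectionMemory:
    """Hypothetical store of user corrections (an illustration, not PicaText's code)."""

    def __init__(self):
        # (scanned_fragment, corrected_fragment) -> number of times seen
        self.counts = Counter()

    def learn(self, scanned, corrected):
        # Record every replaced fragment, e.g. "Torn" -> "Tom" yields ("rn", "m").
        matcher = SequenceMatcher(None, scanned, corrected, autojunk=False)
        for tag, i1, i2, j1, j2 in matcher.get_opcodes():
            if tag == "replace":
                self.counts[(scanned[i1:i2], corrected[j1:j2])] += 1

    def suggest(self, word, min_count=2):
        # Offer alternatives for a scanned word, most frequently seen fix first.
        # Note: str.replace swaps every occurrence of the fragment in the word.
        suggestions = []
        for (old, new), seen in self.counts.most_common():
            if seen >= min_count and old in word:
                suggestions.append(word.replace(old, new))
        return suggestions


memory = CorrectionMemory()
memory.learn("Torn", "Tom")
memory.learn("cornputer", "computer")
print(memory.suggest("Torn"))  # ['Tom']
```

The sketch only suggests alternatives rather than rewriting text on its own, because "rn" is often correct ("corner", "turn"); a real version would need to weigh each suggestion against the OCR engine's own confidence and probably the font and size involved.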

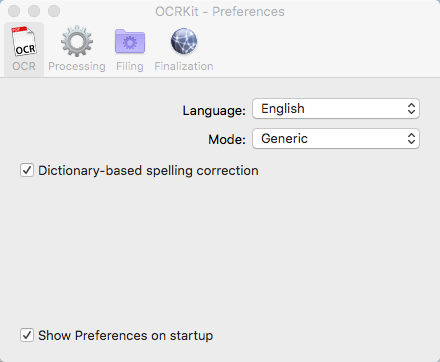

PicaText works "pretty well", which requires some extra explanation. It isn't as accurate as a Google-based OCR, but its main advantage is that *nothing leaves your computer*, which makes it a viable product for text that can't be sent to a cloud service, or for when you don't have a network connection at all.
